January 21, 2025 | Josh LaMar

AI Transparency Framework

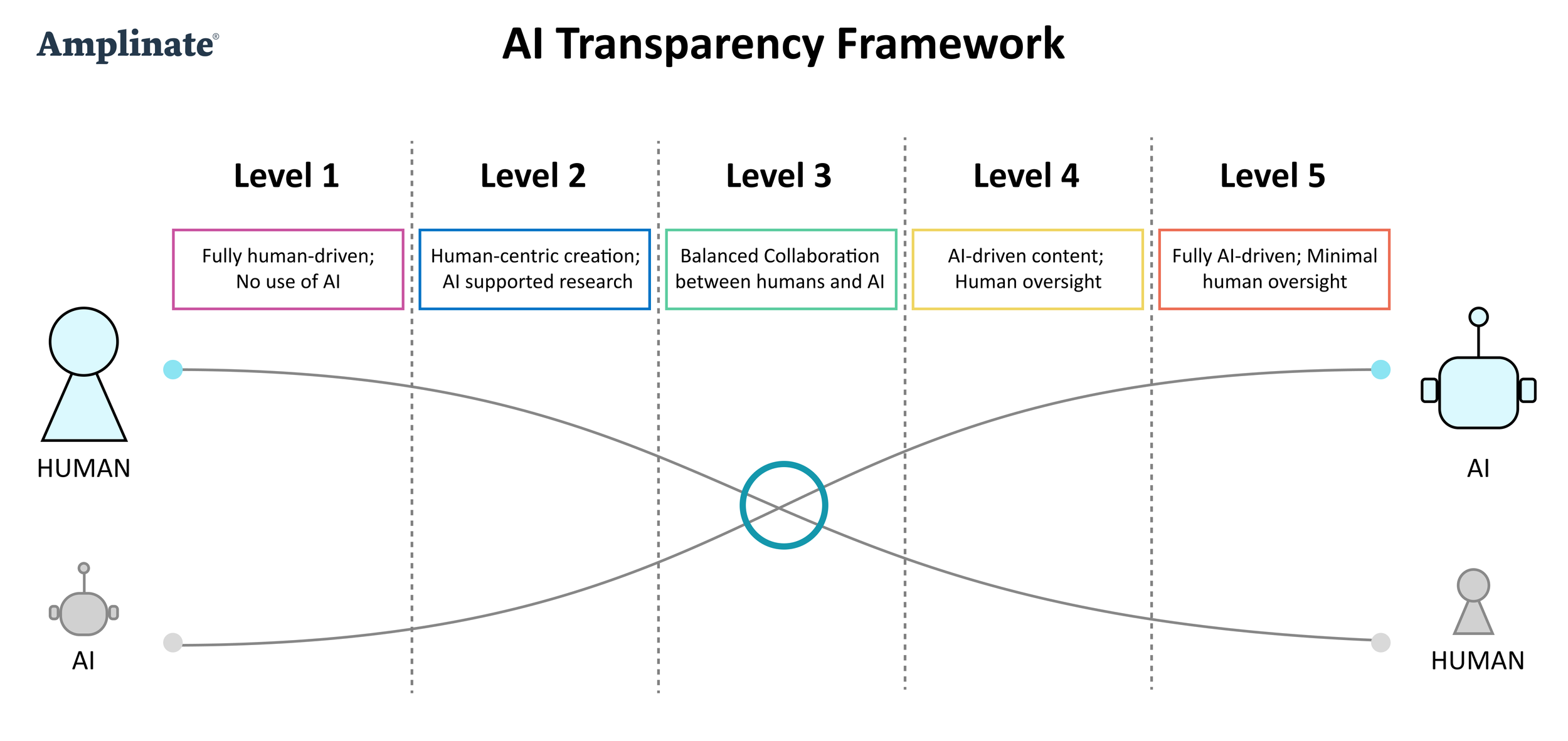

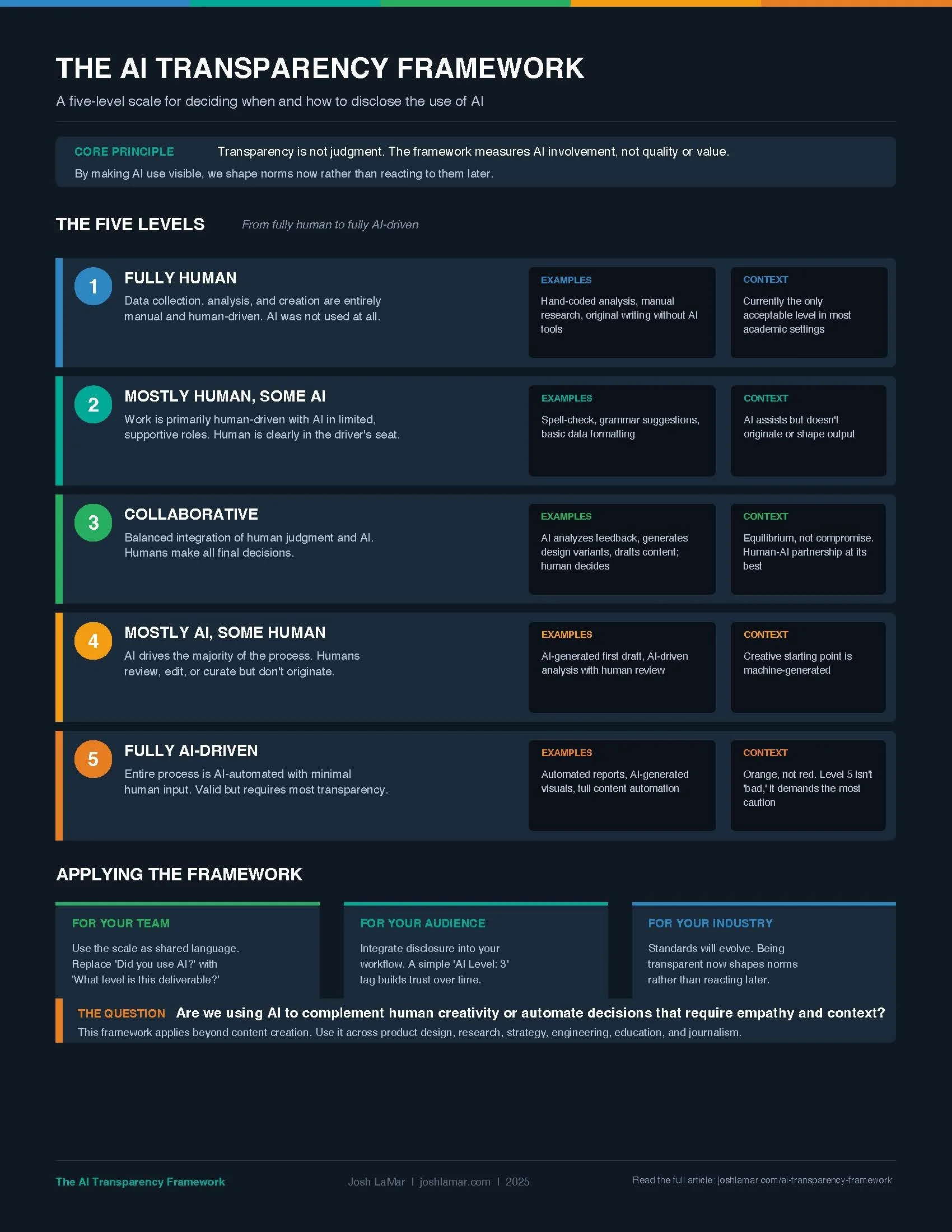

A five-level scale for deciding when and how to disclose the use of AI.

The Problem

AI is reshaping how we work and create. Tools like ChatGPT and Claude have made content creation faster and more efficient. But this shift raises questions most teams aren't asking: At what point does AI enhancement turn into dependence? And should we disclose our use of it?

The answer isn't binary. "We used AI" or "we didn't" doesn't capture reality anymore. Most work today falls somewhere in between. A designer who uses AI to generate initial layout variants and then refines them by hand is working differently than one who publishes raw AI output. But right now, we treat both the same way: with silence.

The AI Transparency Framework offers a practical, five-level scale for making AI use visible. Not to judge it. To acknowledge it.

The Five Levels

Level 1: Fully Human

Data collection, analysis, and creation are entirely manual and human-driven. AI was not used at any point in the process. This is the baseline, and in some contexts, such as academia, it may currently be the only acceptable level.

Level 2: Mostly Human, Some AI

The work is primarily human-driven, with AI used in limited, supportive ways. Think spell-check, grammar suggestions, or basic data formatting. The human is clearly in the driver's seat.

Level 3: Collaborative

A balanced integration of human judgment and AI capability. AI assists in tasks like analyzing user feedback, generating design variants, or drafting initial content, with humans making all final decisions. This represents an equilibrium, not a compromise.

Level 4: Mostly AI, Some Human

AI drives the majority of the process. Humans review, edit, or curate the output but don't originate it. The creative or analytical starting point is machine-generated.

Level 5: Fully AI-Driven

The entire process is AI-automated with minimal human input. This is valid in many contexts, but it requires the most transparency.

In the framework's color coding, Level 5 is orange rather than red, and that choice is deliberate: Level 5 isn't "bad." It simply demands the most caution, because it means fully outsourcing intellectual work to AI.

Why Transparency, Not Judgment

The framework is deliberately a spectrum, not a scorecard. There's no "right" level. A fully AI-generated market analysis might be perfectly appropriate. A fully AI-generated medical recommendation probably isn't. Context determines what's responsible.

But here's the thing: AI-generated content is becoming indistinguishable from human-created content, and it will only get harder to tell the difference. That's exactly why transparency matters now, before it becomes impossible to reconstruct after the fact how a piece of work was made.

By integrating disclosure into our workflows today, we shape the norms around AI use rather than reacting to them after trust has already eroded. This isn't about slowing down adoption. It's about building trust alongside it.

Beyond Content Creation

This framework was designed for content creation, but it applies across any discipline where AI is being integrated: product design, research, strategy, engineering, education, journalism. Anywhere humans and AI are collaborating on output that others will consume, the question of transparency applies.

The scale gives teams a shared language. Instead of debating whether using AI is "cheating" or "efficient," you can have a more productive conversation: "This deliverable is a Level 3. Here's what that means."
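For teams that want to record the level alongside the work itself, the scale can be captured as a small data structure. The sketch below is a hypothetical illustration in Python, not part of the framework: the `AILevel` enum, the `disclosure_label` and `tag_deliverable` helpers, and the metadata keys are all assumptions chosen for the example. It only maps each level number to its name from the framework and rejects values outside 1 to 5.

```python
from enum import IntEnum


class AILevel(IntEnum):
    """The framework's five levels, ordered from least to most AI involvement."""
    FULLY_HUMAN = 1
    MOSTLY_HUMAN_SOME_AI = 2
    COLLABORATIVE = 3
    MOSTLY_AI_SOME_HUMAN = 4
    FULLY_AI_DRIVEN = 5


# Display names copied from the framework's level titles.
LEVEL_NAMES = {
    AILevel.FULLY_HUMAN: "Fully Human",
    AILevel.MOSTLY_HUMAN_SOME_AI: "Mostly Human, Some AI",
    AILevel.COLLABORATIVE: "Collaborative",
    AILevel.MOSTLY_AI_SOME_HUMAN: "Mostly AI, Some Human",
    AILevel.FULLY_AI_DRIVEN: "Fully AI-Driven",
}


def disclosure_label(level: AILevel) -> str:
    """Return a short, human-readable disclosure string, e.g. 'Level 3: Collaborative'."""
    return f"Level {int(level)}: {LEVEL_NAMES[level]}"


def tag_deliverable(title: str, level: int) -> dict:
    """Attach a disclosure level to a deliverable (hypothetical metadata schema)."""
    lvl = AILevel(level)  # raises ValueError for any number outside 1-5
    return {
        "title": title,
        "ai_level": int(lvl),
        "ai_disclosure": disclosure_label(lvl),
    }


if __name__ == "__main__":
    print(tag_deliverable("Market analysis", 5))
```

Running it prints a record whose `ai_disclosure` field reads `Level 5: Fully AI-Driven`. Storing the number and the label together keeps the tag sortable while still giving readers the plain-language level name.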

Download the One-Page Framework Cheat Sheet

All five levels on a single page. Color-coded cards with descriptions, examples, and context for each level. Three application prompts for your team, your audience, and your industry.

Pin it to your wall.

Bring it to your next AI governance conversation.

Share it with your team.

Read the Full Article

The AI Transparency Framework was published in UX Collective in May 2025.

It walks through all five levels in detail, explores how transparency norms will evolve across industries, and makes the case for integrating disclosure into creative and professional workflows now.

About Josh

Josh LaMar is CEO of Amplinate, where he advises on product growth and AI decision strategy. Over 20 years, he has spent 40,000+ hours listening to customers across 19 countries on five continents. He lives between Puerto Vallarta and Paris.
